Progress in artificial intelligence reached new levels in November 2022 with the public launch of ChatGPT. Within seconds, the OpenAI chatbot can answer virtually any question, put together university essays, or come up with recipes based on whatever you have in your kitchen at any given time.

But can artificial intelligence emulate a friendship? Popular messenger platform Snapchat certainly thinks so, judging by the release of its very own chatbot, "My AI". The bot uses ChatGPT technology to create the perfect friend for the app's user base, which is made up primarily of young people.

In April 2023, most Snapchat users received a notification that "My AI" had been added to their friends list. The bot is marketed as an easily customisable "friend" who can be available 24/7, responding to users at the click of a button. Is this a dream come true for users needing a shoulder to lean on?

Not entirely, says Igor Loran, psychologist at Bee Secure and the youth counselling service Kanner-Jugendtelefon (KJT). "This is an artificial friend, a virtual assistant you could perhaps ask for recommendations, but it can never replace a real friend. Understanding and empathy, as you might find in a real person, cannot be emulated by 'My AI'."

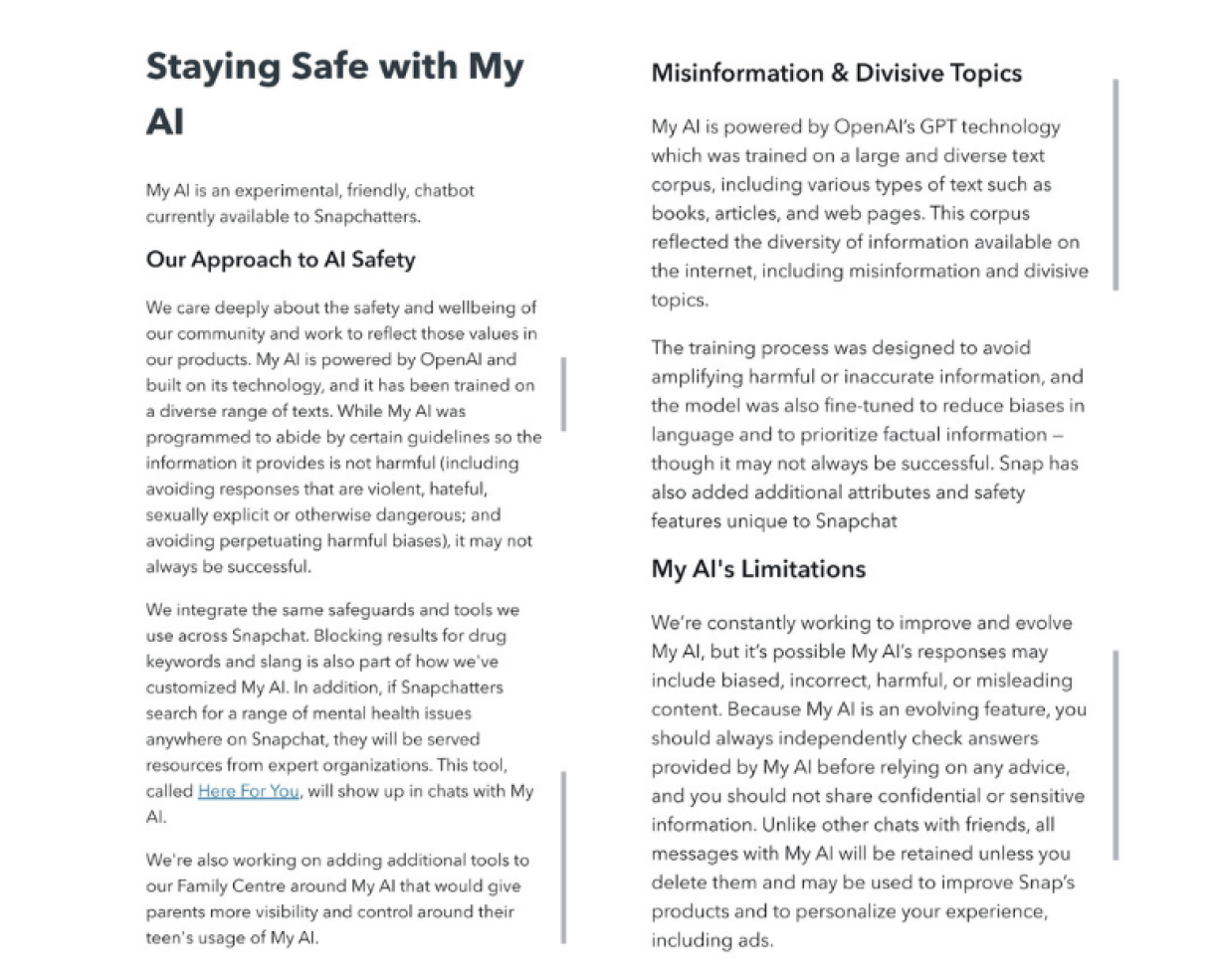

For this reason, it is important not to share any personal information with "My AI". Snapchat itself warns against sharing personal data in its terms and conditions. However, this advice is contradicted by the chatbot itself, which promises users it will keep their secrets confidential.

Snapchat Support does point out that users should not take everything that "My AI" tells them at face value. As Loran says, "it is possible that the AI answers could be biased, false, harmful, or contain misleading content".

This is another risk of reliance on AI, the psychologist explains. "Users may lose their ability to think critically, because they are no longer forced to analyse sources. Furthermore, there is the risk of falling victim to plagiarism."

Neither "My AI" nor ChatGPT indicates its information sources, which can prove damaging to those relying on the service for school or university work. The risk is that users could end up submitting an existing text as their own work, thereby exposing themselves to the consequences of plagiarism.

Not a replacement for real friendships

A friend who never sleeps could certainly be tempting for young people; however, too much reliance on AI could prove dangerous in the absence of real social connections. "This is particularly problematic for young people," says Loran. "They know they can always come back to their virtual friendship, which could actually result in them becoming more isolated in real life."

Young people looking for someone to talk to can contact the Kanner-Jugendtelefon helpline, and the service's website also has a chat function where young people can connect with specialists aged 25 and under.

Generally, the expert recommends the following tips when using the "My AI" tool, or other AI chatbots:

- Never share personal information

- Speak openly to parents or guardians about using the tool

- Refrain from blindly trusting the information provided by the bot

Parents and guardians play a crucial role in teaching their children how to handle social media and chat functions such as "My AI" correctly. Loran advises parents to familiarise themselves with the apps their children use, as trying the apps out themselves is often the only way to gain an accurate picture of their child's social media usage.

Furthermore, it is important to let young people know they can trust someone. Loran recommends building a good relationship based on trust, so a child or young person knows they have someone to turn to when they encounter problems. Forbidding actions as a punishment makes no sense, he adds. "Today's young people are so-called digital natives. They have grown up with technology and will definitely outsmart their parents."

Adults in need of advice on the above topic may also contact the KJT, which recently launched a helpline aimed at parents.